A History of Intelligence

What evolutionary steps powered our potent cognitive capacities? Does understanding this history of the brain help us build artificial intelligence?

We humans give way too much importance to language and symbols as the substrate of intelligence. Primates, dogs, cats, crows, parrots, octopus, and many other animals don’t have human-like languages, yet exhibit intelligent behavior beyond that of our best AI systems. What they do have is an ability to learn powerful “world models” that allow them to predict the consequences of their actions and to search for and plan actions to achieve a goal. The ability to learn such world models is what’s missing from AI systems today.

~Yann LeCun, chief AI scientist at Meta

Much has been made of the literary ambition to write the “Great American Novel,” but less attention has been paid to a parallel race in nonfiction. Maybe this is because “a sweeping scientific narrative of human history” or “the Great Syntopical Book” don’t exactly roll off the tongue. Regardless, the lack of a snappy label has done little to dull the competition. Pinker, Diamond, Harari, and others have all been furiously typing away and poring over vast bodies of scientific literature and research. Almost every year we’re greeted with new titles in this mold, and I’m definitely a sucker for these books, so I tear through as many as possible. My latest is A Brief History of Intelligence: Evolution, AI, and the Five Breakthroughs That Made Our Brains by Max Solomon Bennett.

Bennett and his publisher are wise to the fact that A Brief History of Intelligence sits squarely in the ambitious "big history" genre, billing it as “equal parts Sapiens, Behave, and Superintelligence, but wholly original in scope.” However, awareness of this tradition demands carving out a particular niche. So instead of recapitulating all of human history (Sapiens), illuminating the socioeconomic and technological success of the West (Guns, Germs, and Steel, Why Nations Fail, The WEIRDest People in the World), revealing the true trajectory of political history (The End of History), or explaining the deep and intricate genetic history of man (Who We Are and How We Got Here), Bennett treats readers to a comprehensive account of the evolutionary breakthroughs that gave rise to human-level intelligence. He layers on an additional topical angle, artificial intelligence, placing organic intelligence in dialogue with its artificial counterpart. The comparison provides a reasonably fresh perspective on a capacious, cross-disciplinary, yet somewhat stock scientific history. So while I can’t say that Bennett’s book completely transcends the popular-science trap, it at least aims to be an exemplary entry in the space.

Intelligence Emerges: Five Breakthroughs

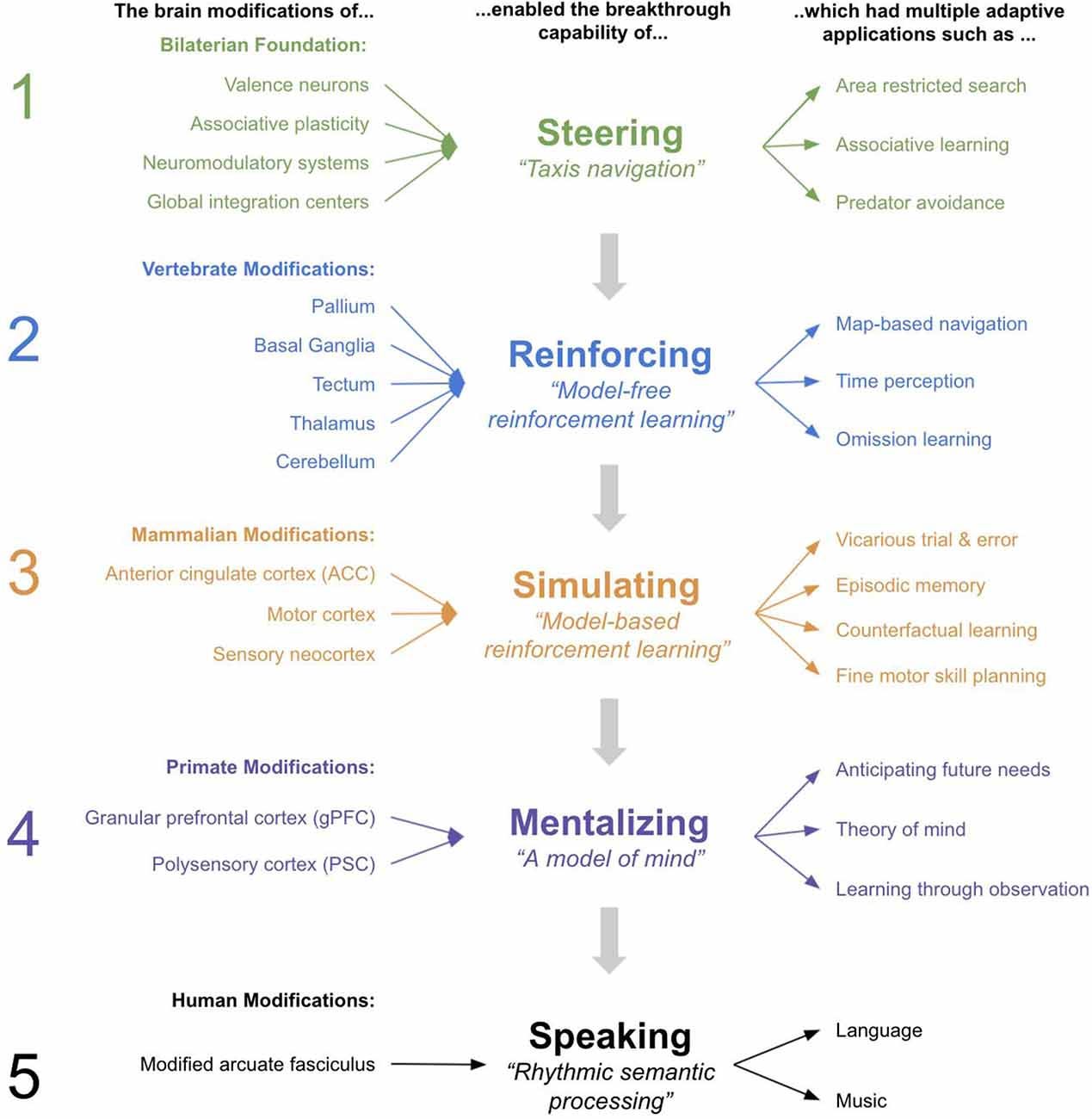

As the title indicates, the book is organized around five evolutionary breakthroughs that Bennett argues account for human intelligence. In short, these adaptations are steering, reinforcement, simulating, mentalizing, and language.

Breakthrough #1: Steering in Early Bilaterians

Around 550 million years ago (mya), there was an evolutionary shift from radially symmetric body plans with networked nervous systems (e.g., coral polyps) to bilaterally symmetric body plans with centralized nervous systems (e.g., nematodes). These latter organisms are grouped as bilaterians, a group that includes us humans.

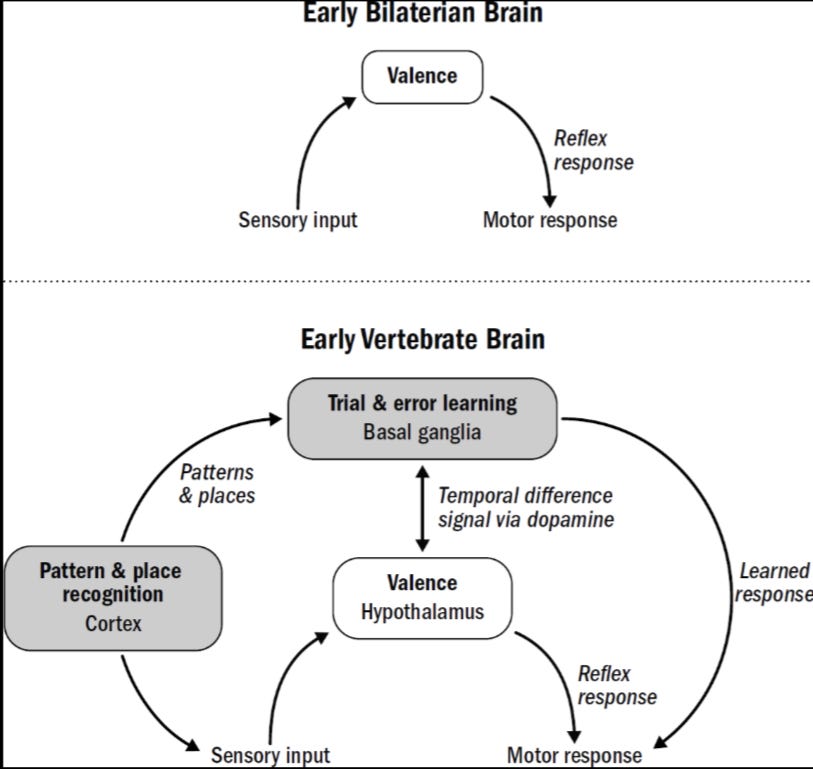

Being a bilaterian means navigating the world by steering: forward, reverse, right, or left. Knowing where to go requires the ability to assess environmental cues and respond with the appropriate movement, and therefore a paired sensory and motor system. Navigational choices are informed by two inputs to this rudimentary brain: the organism’s internal state (e.g., hunger) and the valence (good vs. bad) of external stimuli (food here vs. danger here). To respond to the valence of internal and external cues, the basic nervous systems of bilaterians also needed a way to modulate their sensory-motor circuits. Hence, the dopamine-serotonin system emerged to monitor and direct the sensory-motor circuitry, allowing bilaterians to adjust their behavior to context, affect, and stimuli.
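To make the steering idea concrete, here is a toy sketch in Python. It assumes a one-dimensional world with a single food source and an odor gradient that peaks at the food; the names `odor` and `run_steering` are hypothetical, and hunger is reduced to an on/off switch standing in for the much richer dopamine-serotonin modulation Bennett describes. The rule is the one a steering bilaterian needs: keep going while things get better, reverse when they get worse, and rest when satiated.

```python
def odor(x, food=10.0):
    """Hypothetical odor concentration: highest at the food source."""
    return -abs(x - food)

def run_steering(start=0.0, heading=-1, hungry=True, steps=30, food=10.0):
    """Move one unit per step; reverse whenever the odor gets weaker."""
    x = start
    last = odor(x, food)
    path = [x]
    for _ in range(steps):
        if not hungry:
            break                    # satiated: rest in place
        x += heading                 # keep moving in the current direction
        now = odor(x, food)
        if now < last:               # things got worse (negative valence)
            heading = -heading       # so reverse
        last = now
        path.append(x)
    return path

path = run_steering()                # starts out heading away from the food
print(path[-1])                      # → 10.0
print(run_steering(hungry=False))    # → [0.0]
```

The simulated worm never knows where the food is. It simply overshoots and oscillates around the source, a cartoon of the kind of steering Bennett attributes to early bilaterians like nematodes.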

This system is quite simple: nearly reflexive associative learning. Exploit pleasant environments when activated. Escape unpleasant environments when activated. Hunker down when beleaguered. Rest when satiated. These strategies are physically embedded in the circuits that coordinate these actions. However, the world is complex and sends an abundance of different, mixed signals. Evolutionary success thus depends on deciding which cues and states matter more than others. This is the fundamental challenge of associative learning, “the credit assignment problem.” Bennett posits four basic strategies for an associative-learning-based nervous system to solve credit assignment problems: eligibility traces, overshadowing, latent inhibition, and blocking. These amount to crediting the cues that occur closest in time to rewards, paying attention to the strongest cues, treating stimuli that are common and consistent in the environment as noise, and ignoring new cues that appear after an association with an existing cue has already been established. These mechanisms follow intuitively from the basic physiology of the synapse: “neurons that fire together wire together.”
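Bennett frames these strategies in biological terms. A classic way to see two of them, overshadowing and blocking, fall out of simple error-driven learning is the Rescorla-Wagner model from animal-learning research. The Python sketch below is a minimal implementation of that model (a swapped-in formalism, not Bennett’s own), with hypothetical cue names and salience values. Each cue’s associative strength moves in proportion to its salience times the prediction error, the gap between the reward received and the reward that all present cues jointly predicted.

```python
def rescorla_wagner(trials, salience, beta=0.5, lam=1.0, strengths=None):
    """Return associative strengths after a list of (cues, rewarded) trials."""
    V = dict(strengths or {})
    for cues, rewarded in trials:
        prediction = sum(V.get(c, 0.0) for c in cues)    # what all present cues jointly predict
        error = (lam if rewarded else 0.0) - prediction  # prediction error drives learning
        for c in cues:
            V[c] = V.get(c, 0.0) + salience[c] * beta * error
    return V

salience = {"light": 0.5, "tone": 0.5}

# Blocking: the light alone is paired with food first, then light + tone together.
pretrained = rescorla_wagner([({"light"}, True)] * 50, salience)
blocked = rescorla_wagner([({"light", "tone"}, True)] * 50, salience, strengths=pretrained)

# Control: light + tone from the start, with no pretraining.
control = rescorla_wagner([({"light", "tone"}, True)] * 50, salience)

# Overshadowing: a bright light and a faint tone always appear together.
overshadow = rescorla_wagner([({"light", "tone"}, True)] * 50, {"light": 0.8, "tone": 0.1})

print(f"tone after blocking:      {blocked['tone']:.3f}")
print(f"tone in control:          {control['tone']:.3f}")
print(f"overshadowing light/tone: {overshadow['light']:.2f} / {overshadow['tone']:.2f}")
```

Because the pretrained light already predicts the food, the tone generates almost no prediction error and ends near zero (blocking), whereas in the control it settles near 0.5; in the compound with unequal salience, the brighter light claims most of the credit (overshadowing). The plain model does not capture latent inhibition or eligibility traces, which require extensions.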

It is somewhat awe-inspiring to consider the problems that two systems of nerve circuits (sensory-motor and neuromodulation) can solve. There are limitations to this design, especially with respect to neuromodulation, that still plague us today: addiction, chronic stress, and depression. Nonetheless, the system necessary for steering a bilateral body paved the way for a brain that could learn.

Breakthrough #2: Reinforcement Learning in Early Vertebrates

Sometime during the biological effervescence of the Cambrian period (540-485 mya), the vertebrate (or backboned) body template emerged. At this stage, many of these creatures were quite fishlike. And it was here that the brain began to differentiate into three structures that still shape its anatomical organization today: forebrain, midbrain, and hindbrain.

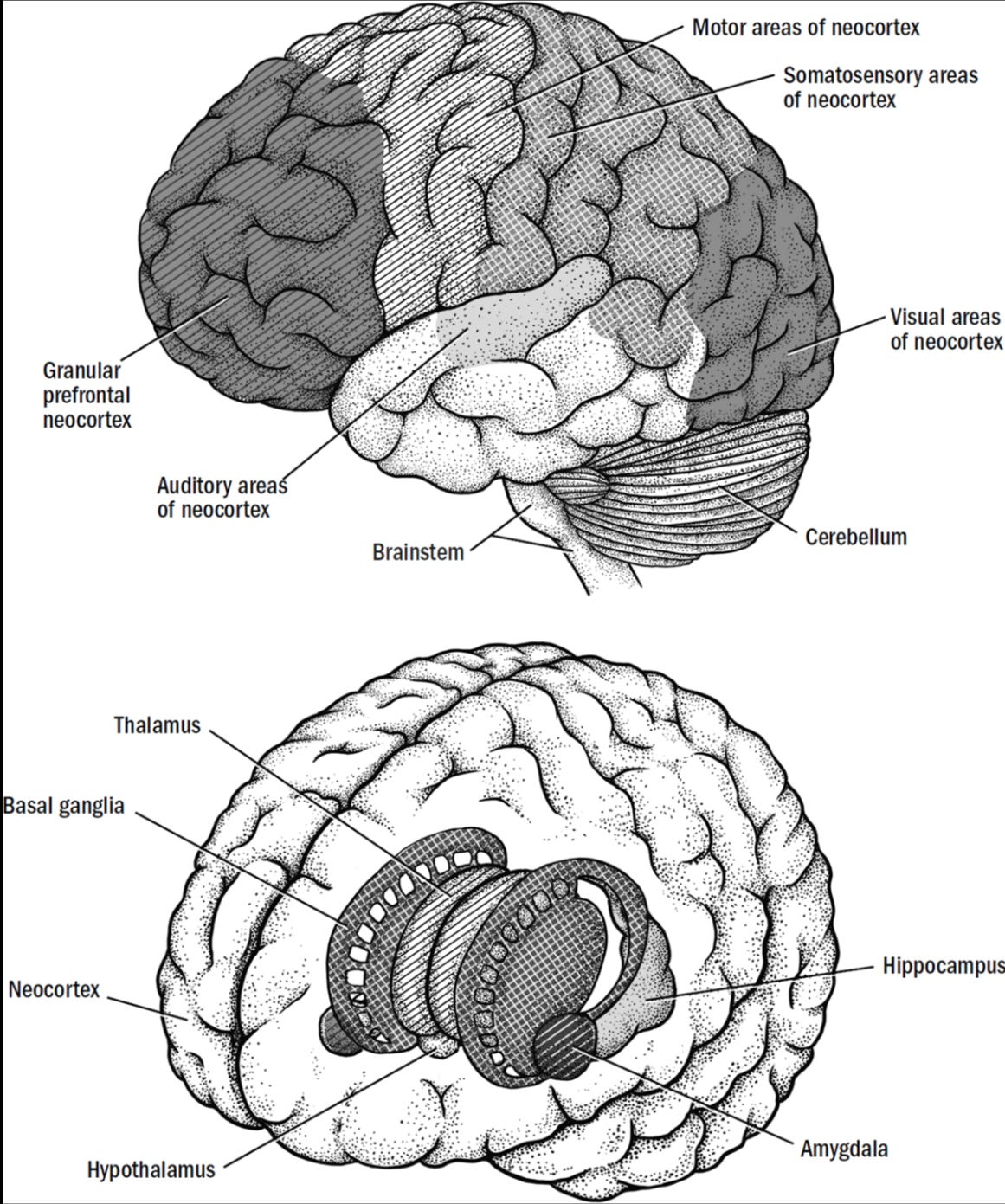

Thanks to the work of behaviorists like Edward Thorndike, Ivan Pavlov, B. F. Skinner, and others, we know that organisms with this tripartite brain template exhibit a type of trial-and-error learning that responds not just to rewards but also to cues associated with rewards. Learning by reinforcement allows arbitrary sequences of actions to be strung together to achieve various goals, like solving a puzzle box to get food. A forebrain structure called the basal ganglia has been shown to play a central role in mediating this reinforcement learning. Eventually, another forebrain structure, the cortex, evolved to enhance the pattern recognition on which more advanced reinforcement learning depends. The dependence on pattern recognition also elaborated other forebrain structures like the hippocampus, which is crucial for mapping three-dimensional space.

The big challenge the fledgling forebrain faced was how to act when the reward sought requires a complex sequence of actions to reach. Put differently, how can reinforcement work when time separates an action from its feedback? This is the temporal credit assignment problem, which “temporal difference learning” was designed to solve.
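In the standard formulation of TD learning associated with Richard Sutton, the learner does not wait for the final outcome. At each step it compares its current prediction of future reward, $V(s_t)$, with a slightly later and better-informed prediction, and learns from the difference, the TD error $\delta_t$. Here $r_{t+1}$ is the reward received on that step, $\gamma$ is a discount factor between 0 and 1, and $\alpha$ is a learning rate:

$$
\delta_t = r_{t+1} + \gamma V(s_{t+1}) - V(s_t), \qquad V(s_t) \leftarrow V(s_t) + \alpha\,\delta_t
$$

For instance, with $\gamma = 0.9$, a step that earns no reward ($r_{t+1} = 0$) but moves from a state valued at 0 to one valued at 1 yields $\delta_t = 0 + 0.9 \cdot 1 - 0 = 0.9$; with $\alpha = 0.1$, the earlier state’s value rises to 0.09. Repeated over many episodes, value propagates backward from the reward to the states and actions that led to it.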

Bennett posits that the brain solves this problem with an actor-critic system, which relies on TD learning’s trick of bootstrapping (updating one prediction using a later one). The actor acts and the critic critiques. The critic predicts future reward; it reinforces actions that make its prediction go up and punishes actions that make it go down. Over time the critic shapes the actor, improving the likelihood of achieving the ultimate aim. An important part of the success of this system is curiosity. The actor needs to want to explore the available environment for its own sake. Otherwise, action guided only by learned associations won’t be enough to solve complex problems.
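Here is a minimal tabular actor-critic in Python, offered as an illustration of the general technique rather than a model of Bennett’s neural account. It assumes a hypothetical five-state corridor in which the only reward comes on reaching the far end, so early moves can be credited only through the critic’s predictions. The critic learns state values `V` from the TD error, and the actor nudges softmax action preferences `H` in the direction that error points. Exploration comes only from the randomness of the softmax policy, a crude stand-in for curiosity; all names and parameter values are illustrative choices.

```python
import math
import random

N_STATES = 5          # states 0..4; state 4 is the goal (terminal)
ACTIONS = (-1, +1)    # step left or right along the corridor

def softmax(prefs):
    m = max(prefs)
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]

def step(state, action):
    nxt = min(max(state + action, 0), N_STATES - 1)
    done = nxt == N_STATES - 1
    reward = 1.0 if done else 0.0                      # reward arrives only at the end
    return nxt, reward, done

def train_actor_critic(episodes=500, alpha_critic=0.1, alpha_actor=0.1, gamma=0.9, seed=0):
    rng = random.Random(seed)
    V = [0.0] * N_STATES                               # critic: predicted future reward
    H = [[0.0, 0.0] for _ in range(N_STATES)]          # actor: action preferences
    for _ in range(episodes):
        state = 0
        for _ in range(200):                           # cap episode length
            probs = softmax(H[state])
            a = rng.choices(range(len(ACTIONS)), weights=probs)[0]
            nxt, reward, done = step(state, ACTIONS[a])
            # TD error: did things go better or worse than the critic predicted?
            target = reward if done else reward + gamma * V[nxt]
            delta = target - V[state]
            V[state] += alpha_critic * delta           # critic improves its prediction
            for b in range(len(ACTIONS)):              # actor: gradient step on log-softmax
                grad = (1.0 if b == a else 0.0) - probs[b]
                H[state][b] += alpha_actor * delta * grad
            state = nxt
            if done:
                break
    return V, H

V, H = train_actor_critic()
print([round(softmax(h)[1], 2) for h in H[:-1]])       # probability of stepping right
print([round(v, 2) for v in V])
```

After training, the actor should prefer stepping right in every state and the critic’s values should rise toward the goal, even though no step before the last is ever rewarded directly.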

The proof of concept for the actor-critic model can be found in Richard Sutton’s work on artificial intelligence and its application to games. In 1994, a young physicist named Gerald Tesauro leveraged Sutton’s bootstrapping insights on TD learning to build TD-Gammon, a program that could compete with humans at backgammon. This was a stunning feat for the time. Today, many AI systems, such as self-driving cars, rely on TD learning.

Breakthrough #3: Mental Simulation in Mammals

Sometime during the reign of the dinosaurs (250-150 mya), a period of intense predator-prey competition, small mammals evolved along with a new cognitive ability: simulation. The advantage of simulation was being able to test trial-and-error outcomes vicariously and build a causal model of the world. The ability to game out events before they occur required numerous adaptations: far-ranging vision (observation), warm-bloodedness (perpetual activity), and a neocortex (prediction).

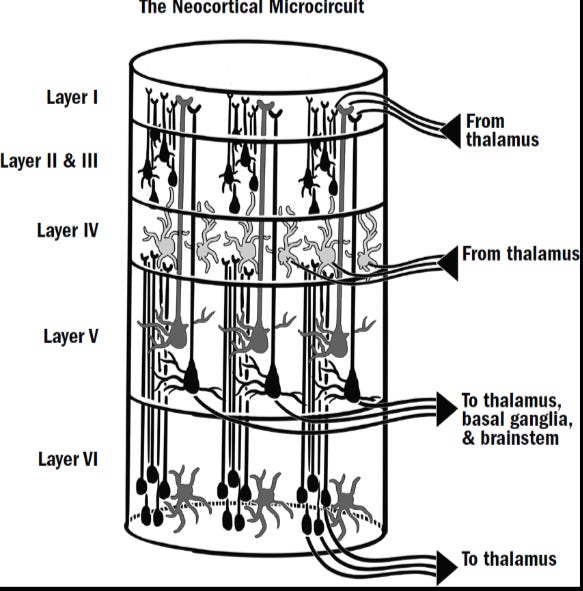

The big question, then, is how simulation occurs in the neocortex. Bennett argues that the sensory neocortex evolved to generate a model of the external world in concert with other neocortical circuits. This is yet another bootstrapping step, one that enables the neocortex to learn without having to actually act on the world. An early mammal under intense predation benefited from simulating actions that could provide a survival advantage. Its model of the world allowed it to learn vicariously by trial and error, test counterfactuals in order to understand causes, and form episodic memories.
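A toy version of this idea can be sketched in Python under loudly hypothetical assumptions. Suppose an early mammal has already learned a small world model mapping (place, action) pairs to the place it would end up and the reward or harm it would experience there. Before moving, it can search through simulated action sequences and pick the best one without ever exposing itself to danger. The place names, rewards, and the `simulate` and `plan_best` helpers are invented for illustration, and exhaustive lookahead is just the simplest form of model-based planning, not a claim about how the neocortex does it.

```python
from itertools import product

ACTIONS = ("left", "right")

# Hypothetical learned world model: (place, action) -> (next place, reward)
WORLD_MODEL = {
    ("burrow", "left"): ("open_field", -5.0),   # a predator patrols the open field
    ("burrow", "right"): ("thicket", 0.0),
    ("open_field", "left"): ("food", 1.0),
    ("open_field", "right"): ("burrow", 0.0),
    ("thicket", "left"): ("burrow", 0.0),
    ("thicket", "right"): ("food", 1.0),
    ("food", "left"): ("food", 0.0),
    ("food", "right"): ("food", 0.0),
}

def simulate(model, start, plan):
    """Imagine executing a plan; return total simulated reward and final place."""
    place, total = start, 0.0
    for action in plan:
        place, reward = model[(place, action)]
        total += reward
    return total, place

def plan_best(model, start, depth=2):
    """Try every action sequence of the given length in simulation only."""
    best_plan, best_value = None, float("-inf")
    for plan in product(ACTIONS, repeat=depth):
        value, _ = simulate(model, start, plan)
        if value > best_value:
            best_plan, best_value = plan, value
    return best_plan, best_value

print(plan_best(WORLD_MODEL, "burrow"))   # → (('right', 'right'), 1.0)
```

The route through the open field is rejected purely in imagination, which is the vicarious trial and error described above; calling `simulate(WORLD_MODEL, "burrow", ("left", "left"))` is a small counterfactual query asking what would have happened on the riskier path.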

Breakthrough #4: Modeling Minds in Early Primates

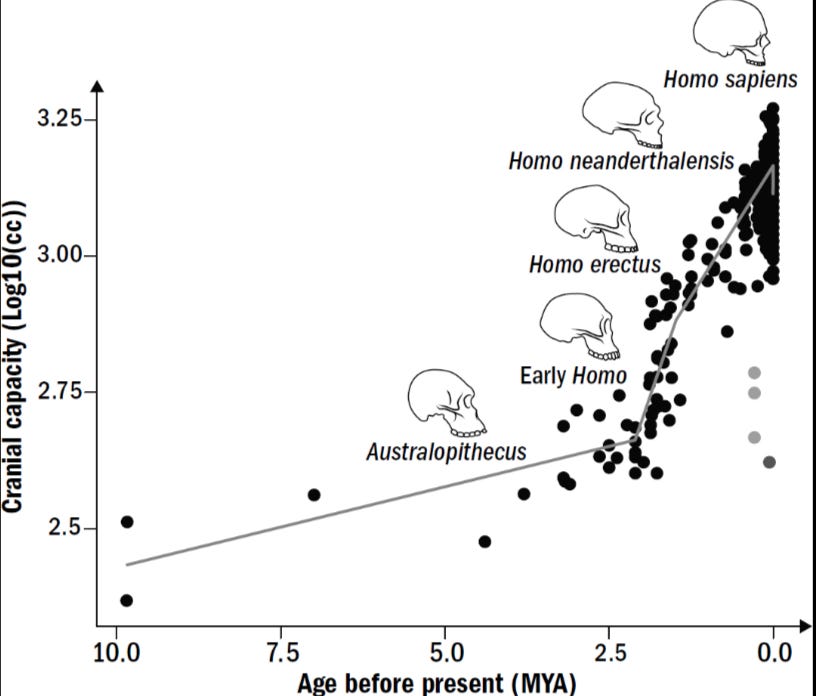

In the 1980s and 1990s, a collection of primatologists and evolutionary psychologists, including Nicholas Humphrey, Frans de Waal, and Robin Dunbar, developed the social-brain hypothesis. It predicted that the larger the neocortex in a primate, the larger that primate’s social group. This correlation has found empirical support and suggests something about why human brains are so large and how that growth came about.
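The best-known quantitative form of this correlation is Robin Dunbar’s regression of mean group size $N$ on neocortex ratio $C_R$ (neocortex volume divided by the volume of the rest of the brain) across nonhuman primates. As it is usually cited:

$$
\log_{10} N = 0.093 + 3.389\,\log_{10} C_R
$$

Plugging in a human neocortex ratio of about 4.1 gives $\log_{10} N \approx 0.093 + 3.389 \times 0.613 \approx 2.17$, or $N \approx 148$, the origin of the popular “Dunbar’s number” of roughly 150. It is an extrapolation from a correlation, not a law.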

Primate politics are quite Machiavellian. The environment is unforgiving, and primate groups often have to coordinate complex tasks and teach newcomers. The inevitable tradeoffs give rise to social hierarchies, which help coordinate group action but also create competing tensions. This proto-politics plausibly makes it advantageous for individuals to understand the minds of others in their group. Navigating the fraught dynamics of competing individual and group interests within ever-larger groups would select for brains that can mentalize, i.e., model themselves and others.

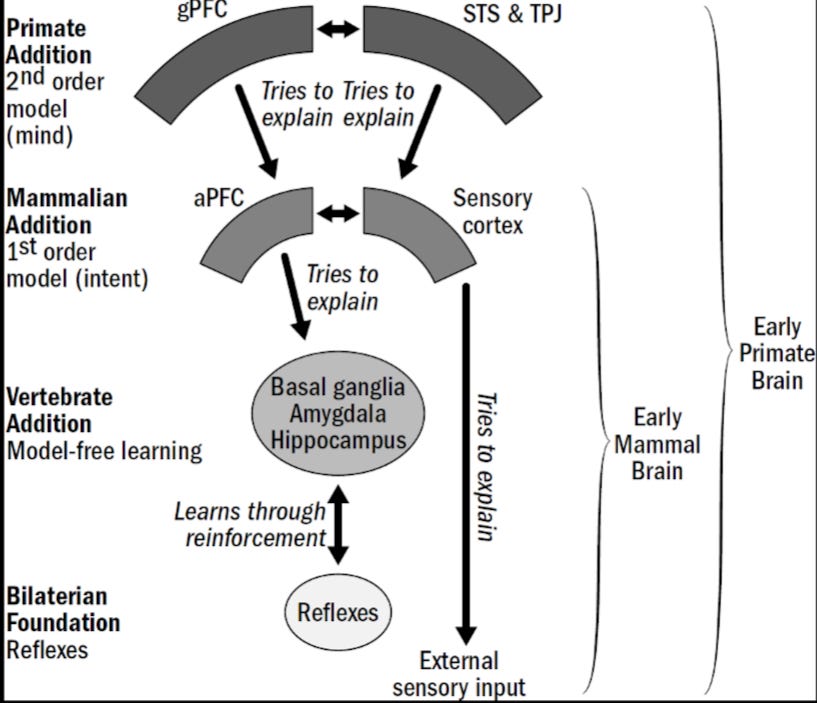

Mapping a mind is hard. AI certainly can’t do it. How do we? Bennett argues that mentalization arises from the complex interplay between the sensory cortex (our world model) and two other regions of the neocortex, the granular prefrontal cortex (gPFC) and the agranular prefrontal cortex (aPFC), which together generate our self model. Bennett argues this system is generative: it is a prediction machine shaped (or fine-tuned) by reciprocal circuitry that continuously responds to internal and external inputs and feedback. Moreover, having a theory of mind improved the ability of early primates to learn through imitation and to anticipate future needs, something that was important given their frugivorous diets.
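One standard way cognitive scientists formalize “using a model of yourself to read another mind” is Bayesian inverse planning: assume the other animal acts roughly as you would toward its goal, then ask which goal best explains the moves you observed. This is a swapped-in technique, not Bennett’s circuit-level account. The Python sketch below assumes a hypothetical one-dimensional world with two fruit trees and an invented 80% chance that an agent steps toward its goal; `move_likelihood` and `infer_goal` are illustrative names.

```python
def move_likelihood(position, move, goal, p_toward=0.8):
    """Probability of a +1/-1 step if the agent is heading for `goal`."""
    if goal == position:
        return 0.5                      # already there: either step equally likely
    toward = 1 if goal > position else -1
    return p_toward if move == toward else 1.0 - p_toward

def infer_goal(start, moves, goals, p_toward=0.8):
    """Posterior over goals after watching a sequence of steps (uniform prior)."""
    posterior = {name: 1.0 / len(goals) for name in goals}
    position = start
    for move in moves:
        for name, goal_position in goals.items():
            posterior[name] *= move_likelihood(position, move, goal_position, p_toward)
        total = sum(posterior.values())
        posterior = {name: p / total for name, p in posterior.items()}  # Bayes' rule
        position += move
    return posterior

goals = {"left_tree": -5, "right_tree": 5}
print(infer_goal(start=0, moves=[+1, +1], goals=goals))
```

Two observed steps to the right already put more than 90% of the posterior on the right-hand tree: the observer explains another animal’s behavior by asking what it would itself be doing if it moved that way.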

Breakthrough #5: The Language Instinct in Early Humans

The last breakthrough on the road to human-level intelligence is spoken and written language. Bennett frames our unique communicative ability as the result of a simple scaling up of the primate brain, pushed along by a competitive ratchet driven by the social dynamics of growing primate groups. Bennett points out that human intelligence may not be so distinct after all, a possibility Darwin also considered:

Darwin believed that “the difference in mind between man and the higher animals, great as it is, is certainly one of degree and not of kind.” What intellectual feats, if any, are uniquely human is still hotly debated among psychologists. But as the evidence continues to roll in, it seems that Darwin may have been right.

Just as with self-modeling, Bennett argues that language was an adaptation selected for by social group dynamics and by a need to transmit skills and ideas across generations. He points to cooking, parenting, and a cycle of altruism and free-riding monitored by gossip and policed by cruelty as the pressures driving the human language instinct. However, Bennett concedes that evolutionary theories of language are many and diverse; no consensus model exists among scientists today. Although we know that language, especially written language, has been critical to humanity’s cumulative advancement through the maintenance and growth of knowledge and cultural institutions, it is a capability whose true origins we know little about.

Large Language Models and AI’s Future

After identifying the importance of human language, Bennett naturally explores the power, promise, and limitations of the large language models (LLMs) that are dominating today’s headlines about AI.

Bennett expresses admiration for the power of LLMs like GPT-3 and their apparent ability to reason about the physical world without actually experiencing it. However, he highlights how poorly LLMs like GPT-3 perform on simulation and mentalizing tasks that even some nonhuman primates can handle.

Bennett is clear that LLMs won’t be the ultimate route to human-like intelligence, though they could offer a window into human-level AI. Bennett seems implicitly sanguine about the prospects of achieving artificial general intelligence. His choice to analogize AI mechanisms with those of the human brain suggests that he thinks the brain’s powerful capacities can be fully recapitulated without the same evolutionary trajectory. Unfortunately, Bennett does not comment on this explicitly or at length. His goal is instead to spotlight the shortcuts that have already been found.

What to Make of Bennett’s Narrative?

When it comes to the five evolutionary breakthroughs, Bennett's insights are not mind-bogglingly clever or original. He maps a fairly straightforward linear trajectory that fits within current paradigms. He describes the what and when of anatomical and behavioral adaptations, pointing to the probable selection pressures responsible. Many hard-working undergraduates in evolutionary biology will have reached similar conclusions through their coursework and reading, and many gifted scientists and writers have told this story before, or at least large parts of it. The true yield of the book lies in the interludes on artificial intelligence. These recount rarely told (popular) histories of AI development and present concepts from AI from a synthetic, revelatory perspective that demonstrates the substrate independence of intelligence. Evolution found solutions that gave us certain problem-solving abilities, and we have found similar solutions in advancing AI. The deep and persuasive confirmation of this insight will energize interest in both AI development and our own brains.

I don't want to sell Bennett short: A Brief History of Intelligence is a real accomplishment, especially for a science writer without advanced training (Bennett is an entrepreneur in AI). But Bennett's prose lacks the verve and panache of many of the other sweeping works it competes with. This isn't particularly surprising given that this is a debut work and Bennett is young. However, he does deliver an eminently digestible, tightly organized narrative. He tames what in reality is an unwieldy subject, and he does so without the glibness or embarrassing oversimplifications to which his more celebrated rivals are prone. His clear strengths are organization and distillation. He drills down to essential insights from various literatures and draws out clever and direct parallels to AI. It's shoe-leather science communication that many will enjoy and from which many will benefit. For those interested in the capacity of the brain and the origins of intelligence, Bennett has saved readers a great deal of time by assembling this book.

The clarity and confidence Bennett exudes early in the book wane as the reader works through the final breakthroughs. Some of this is because the science grows considerably more ambiguous and complex. The book would also have benefited from a deeper exploration of neural network algorithms and LLMs. We get some delicious appetizers, but a work like this warrants a full entrée on these topics. Another notable weakness is imprecision about the concept of intelligence. Bennett treats it capaciously and doesn’t reflect on exactly what it is and how it’s constituted beyond simply being able to solve problems. This is likely strategic given the difficulty of the question. Nonetheless, I really enjoyed the comprehensive recap of central nervous system evolution and capabilities. I'm happy to strongly recommend this work to anyone interested in neuroscience, AI, and big-idea books.

Bennett assumes there is intelligence on Earth.