All Bayes Everything

Subjective beliefs can offer a path to decreasing prediction error. This is the power of Bayes' Theorem. Plus, our flawed brains may be natural Bayesian operators.

It is quite likely that you’ve stumbled across the commentary of some egghead who kept referring to his or her “priors” and whether the latest such and such has caused them to “update” those priors.1 If this is the case, you already have some exposure to Bayesian thought. It is not as esoteric as the name or as any eponym preceding the word “theorem” typically seems. In fact, it is probable that you have been doing an intuitive and informal version of Bayes’ Theorem to make decisions your whole life.

As with anything in statistics and probability, a lot rests on very subtle turns of language and the application of abstract concepts that vary in their relative intuitiveness. Fortunately, the science writer and podcaster Tom Chivers (who can be found here at Substack) has authored just the book to help us get a deeper grasp of what Reverend Thomas Bayes figured out in the 18th century. Chivers’ Everything is Predictable: How Bayesian Statistics Explain Our World delivers a concise tour of the eponymous body of thought, including its historical origins, its controversial place in statistics, its applications in various disciplines, and its possible embeddedness in our (neuro)biology. Chivers’ book is such a treat that I feel compelled to provide readers with a respectable preview of the contents as well as some of my own commentary.

What is Bayes’ Theorem?

As my introduction has made clear, I think even the most statistically and mathematically averse reader is likely to have an intuitive grasp of the ideas central to Bayes’ Theorem. Because of this, I think readers can handle an actual description of the formal theory. However, I won’t settle for the formal description as anyone can go and Google that on Wikipedia. Instead, I will also reformulate things in plain language and give an example.2 This is something that Everything is Predictable accomplishes impeccably. Chivers communicates high-level statistical concepts with incredible clarity and shows all his work when applying the theorem to real-world examples. I will do my best impression.

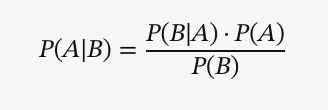

Bayes’ Theorem is a fundamental concept in probability theory that describes the probability of an event, based on a prior understanding of conditions that might be related to the event. It provides a structured way to incorporate new evidence into our existing understandings via iteration. In essence, Bayes’ Theorem is a mathematical formalization of inductive reasoning.

Bayes’ Theorem is expressed as:

$$P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B)}$$

Where:

P(A|B) is the posterior probability: the probability of hypothesis (A) given the data (B).

P(B|A) is the likelihood: the probability of data (B) given that the hypothesis (A) is true.

P(A) is the prior probability: the initial probability of hypothesis (A), before considering the data (B).

P(B) is the marginal probability: the total probability of data (B) under all possible hypotheses.

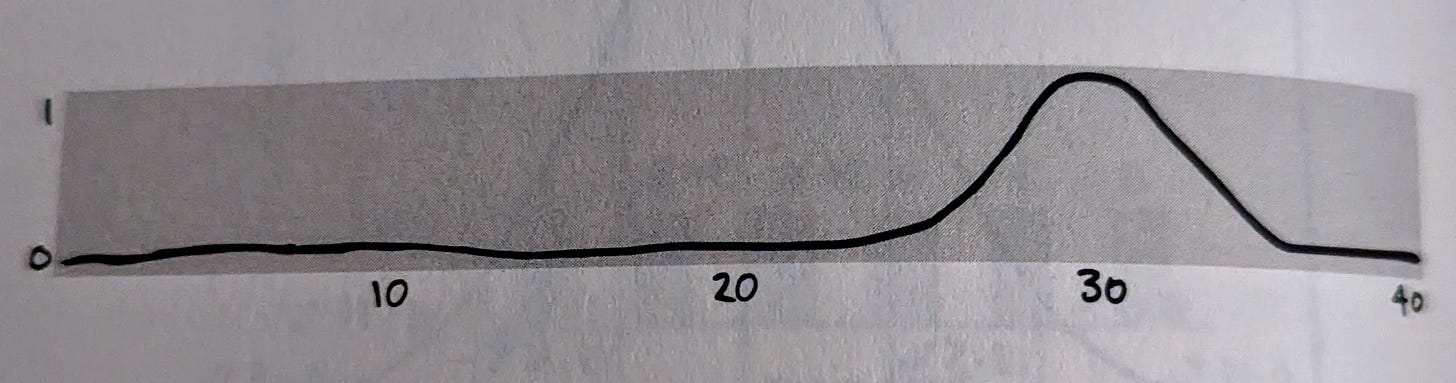

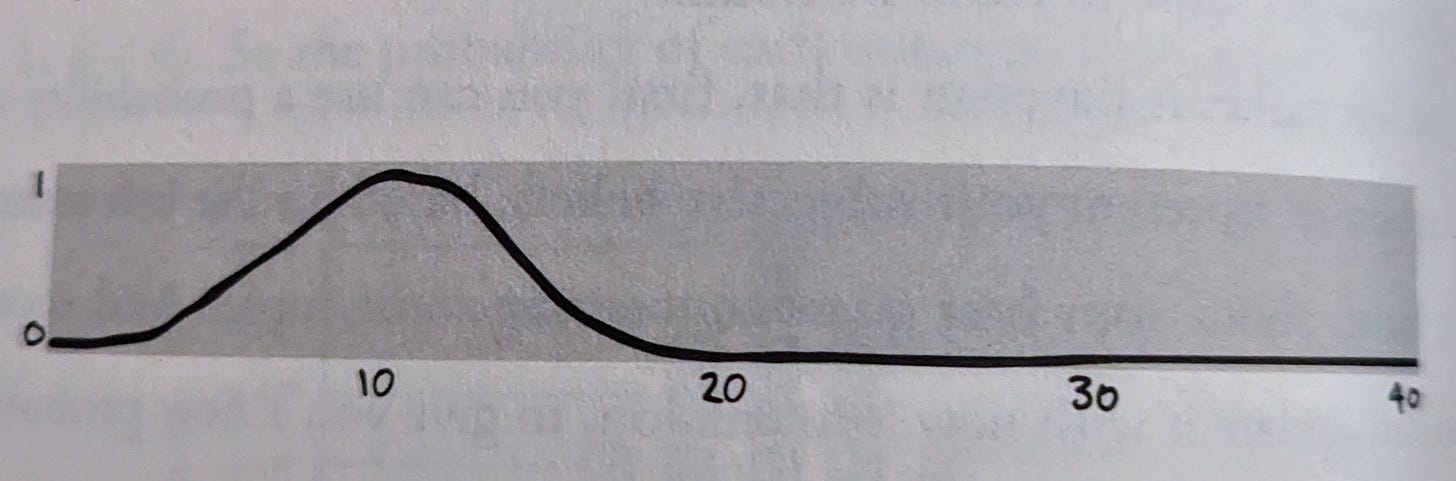

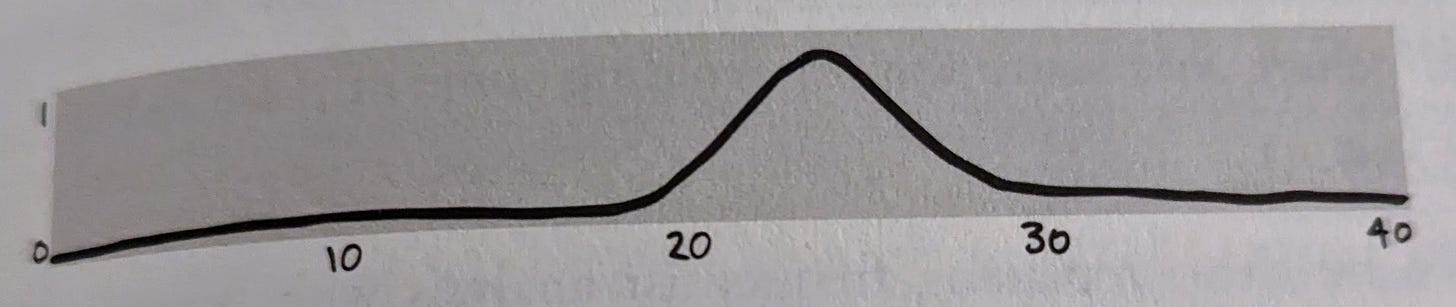

The crucial insight is that the probability of your event of interest is proportional to your prior belief in its probability multiplied by the likelihood of the observed data given that event. It is best to think about it as combining two probability distributions - the prior curve and the likelihood curve (shown below). The resulting distribution is your posterior curve and should be your best understanding of the probability of your event of interest. And you guessed it! The posterior becomes your new prior when you start the process over. This is the “updating” process I referenced earlier.

From what I’ve explained thus far, it is hopefully clear that your subjective starting point is quite influential, especially if it’s extreme. Good Bayesianism isn’t necessarily agnostic about that subjective start. For Bayesianism to work efficiently in reality, it is often important to choose good priors even in situations where one’s completely or largely ignorant. Hence, Chivers dedicates a solid portion of Everything is Predictable to just how one can do that.

The subjectivity inherent to Bayes’ Theorem is perturbing to many. It may seem like a weakness to you too, but it is also a great strength. The subjectivity enriches the information content of data and enables the powerful updating process. Theoretically, even in the scenario where our prior beliefs are completely bonkers, if we collect enough data over time we’ll inch toward more accurate predictions about the world. Chivers points out that how much we adjust our prior should be proportional to how much of an informational surprise is contained in our observed data. In other words, if we observe an event that we thought was extremely unlikely we should update our prior more than if we observe an event that we expected to see.
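To make the updating loop concrete, here is a minimal sketch in Python. It is my own illustration rather than anything from Chivers’ book, and it assumes a simple setup: beliefs about an unknown rate are stored as a Beta distribution, and the data arrive as counts of hits and misses. The names `update`, `mean`, and `run`, the two starting priors, and the batch data are all made up for the example.

```python
def update(prior, successes, failures):
    """Conjugate update: a Beta(a, b) prior plus hit/miss counts gives Beta(a + s, b + f)."""
    a, b = prior
    return (a + successes, b + failures)


def mean(belief):
    """Mean of a Beta(a, b) distribution, used here as the 'best guess' for the rate."""
    a, b = belief
    return a / (a + b)


def run(prior, batches):
    """Feed batches one at a time; each posterior becomes the prior for the next batch."""
    belief = prior
    history = [mean(belief)]
    for successes, failures in batches:
        belief = update(belief, successes, failures)
        history.append(mean(belief))
    return history


# Hypothetical data: 10 batches of 100 observations, 30 hits in each batch.
batches = [(30, 70)] * 10

skeptic = run((1, 50), batches)   # extreme prior, mean of about 0.02
believer = run((50, 1), batches)  # extreme prior, mean of about 0.98

for step, (s, b) in enumerate(zip(skeptic, believer)):
    print(f"after {step * 100:4d} observations: skeptic={s:.3f}  believer={b:.3f}")
```

The two observers start at opposite extremes, yet after 1,000 observations their estimates sit within a few percentage points of each other and of the 30% hit rate in the data. In the first batch the believer, for whom a 30% hit rate is the bigger surprise, also moves further than the skeptic does.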

There are many contexts where Bayes’ Theorem is especially handy. High-impact examples can usually be drawn from medical diagnostic testing. Chivers does just this to open his book, using breast cancer screening. In the example, Chivers asserts that breast cancer screening has a sensitivity (i.e. the true positive rate) of 80% and a specificity (i.e. the true negative rate) of 90%. Standing on their own these numbers sound good, but Chivers shows that if the base rate of cancer in the tested population is low - say 1%, the prior in this case - then false positives will end up overwhelming the number of true positives. The actual chance that a positive screen reflects a real case of breast cancer in this low-risk population would be just 7%!
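The arithmetic behind the screening example can be checked in a few lines. This is a sketch using only the three numbers quoted above (1% base rate, 80% sensitivity, 90% specificity); the function name `posterior_given_positive` is my own label, not something from the book.

```python
def posterior_given_positive(prior, sensitivity, specificity):
    """P(disease | positive test) via Bayes' Theorem."""
    true_positives = sensitivity * prior                 # P(positive | disease) * P(disease)
    false_positives = (1 - specificity) * (1 - prior)    # P(positive | healthy) * P(healthy)
    # The denominator is P(positive): every route to a positive result.
    return true_positives / (true_positives + false_positives)


ppv = posterior_given_positive(prior=0.01, sensitivity=0.80, specificity=0.90)
print(f"Chance a positive screen is a real case: {ppv:.1%}")
```

In counts, out of 10,000 people screened, 100 have cancer and 80 of them test positive, while 990 of the 9,900 healthy people also test positive. That is 80 real cases among 1,070 positives, or roughly 7%.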

A Resilient Insight

Maybe the above example gave you enough of a shock, but maybe not. You may still be asking yourself, “If Bayes’ Theorem is so important and useful, why wasn’t I taught it in high school or college?” You may already be worried that it is either harder or less important than advertised. This is a good question to ask, and based on Chivers’ book, I think I’ve finally come around to answering this question satisfactorily for myself.

Previously, I figured Bayes’ Theorem was simply on the outs when it came to statistics in science - known, useful in special cases but otherwise beyond usual methods. I thought it was some obscure insight resurrected from history and championed by a coterie of nerds on the internet.3 Given the lack of Bayes in my formal education, I initially thought that it was otherwise seen as not particularly relevant or useful to research science.4 In the day-to-day practice of most labs, this is (perhaps unfortunately) somewhat true. When talking about the use of statistics in most research science, which is how most of my exposure to statistics has come, Bayesian statistics is not the norm.5 It is somewhat uncommon and in some places regarded with suspicion.6 However, it turns out there is a lot more to the notable absence of Bayes. Part of it has to do with the contingencies of history - the intellectual, personal, and technological variety.

The first chapter of Everything is Predictable speed runs a biography of Thomas Bayes (we don’t know much about him unfortunately) and the history of probability and statistics. Despite this perhaps dry sounding description of contents, the history of statistics is a lot sexier than you thought… or at least it is yoked to a certain type of degeneracy, gambling. The funny thing is many of these dissolute European gentlemen with a fancy for dice and cards happened to have some genius friends (e.g. Blaise Pascal), or dabbled with numbers themselves. Chivers follows how the quest for an edge at the table led to basic statistical discoveries like the law of large numbers.7

This of course isn’t the whole story either. So far as we know, Bayes wasn’t a degenerate himself. In fact, from what we do know, he seems to be quite the opposite. He was a dissenter from the Church of England and a math hobbyist who hobnobbed with many brilliant thinkers. It appears Bayes’ understanding of inferential or inverse probability (of which Bayes’ Theorem is a major type) matured when he completed a peer review of a scientific paper on measurement error in astronomy. Shrewdly, Bayes got to the central question of interest here, “How likely is it that a hypothesis is true, given this data?” This is exactly the converse question of what is usually asked by scientists, “How likely am I to see this data given this hypothesis?”

Chivers’ recounting of the relevant history of statistical thought also illustrates that despite Bayes formally getting to the point first, he wasn’t the only one to make this discovery. As mathematical and statistical approaches matured, many thinkers (e.g. Pierre-Simon Laplace) independently re-derived similar insights in different contexts. The important thing is that Bayes’ Theorem wasn’t lost to history despite the nondescript and somewhat forgettable nature of the discoverer, because the idea has real value and in some senses is acting any time someone is trying to rationally make a decision under uncertainty.

Can Bayes’ Theorem Improve Science?

Chivers co-hosts a podcast called The Studies Show with Stuart Ritchie, author of Science Fictions: How Fraud, Bias, Negligence, and Hype Undermine the Search for Truth. They do frequent deep dives on particularly fraught areas of the scientific literature and evaluate the strengths and weaknesses of certain scientific claims. A disturbing takeaway from regularly listening to this type of dissection of science under meta-scientists’ scalpels is that there is a lot of junk out there. Moreover, the junk persists for particular systematic reasons. Arguably, one of those reasons concerns the approach of research scientists to probability theory.

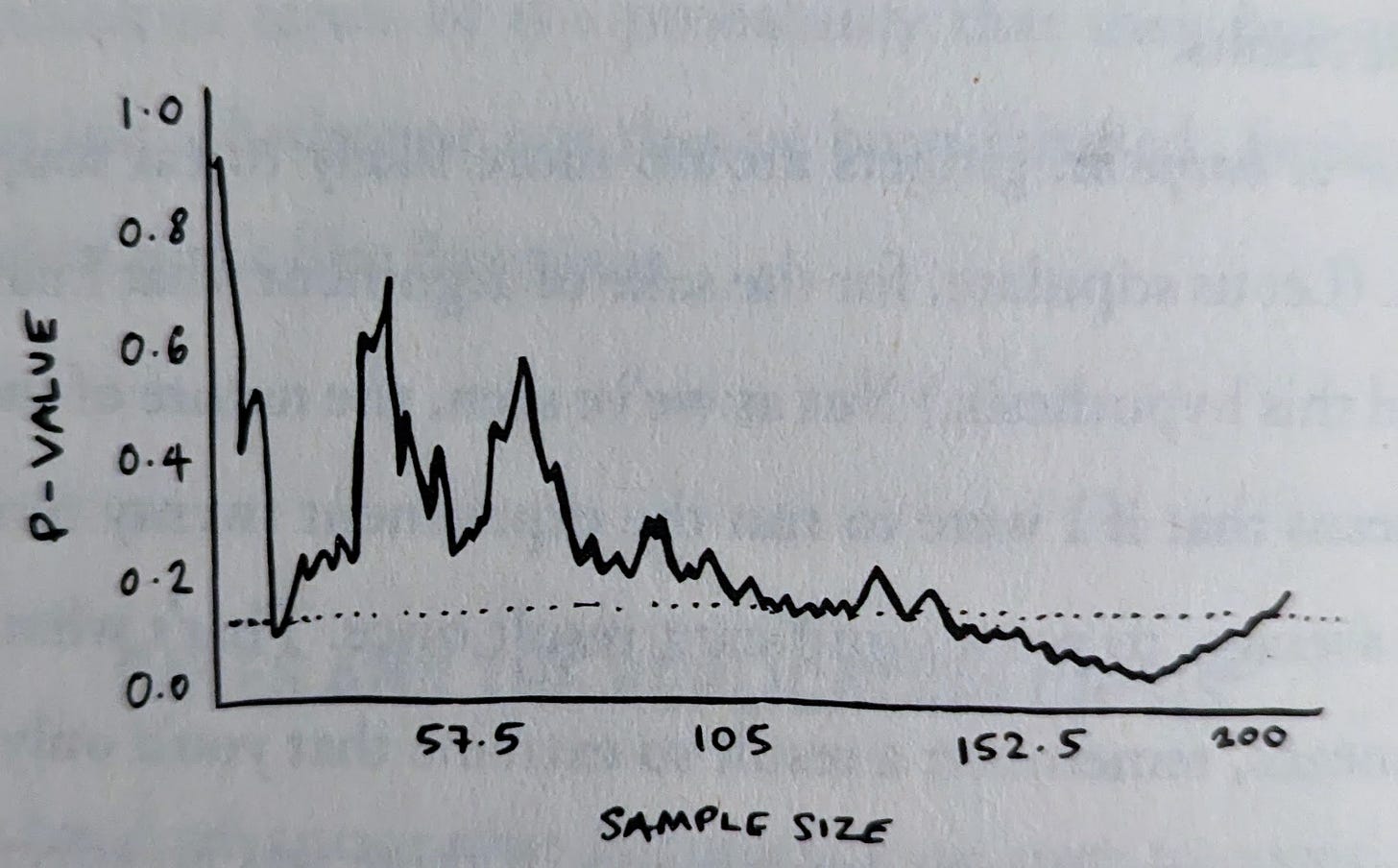

Scientists tend to use an approach called Frequentism. A frequentist tests a hypothesis of no difference, the null hypothesis, and evaluates experimental results with a p-value. The p-value describes how likely results at least as extreme as the observed ones would be if the null hypothesis were true. If the p-value is less than the arbitrary threshold of 0.05, then the null can be provisionally rejected in favor of the researcher’s alternative hypothesis. The issues arise both with the p-value itself and the p-value threshold. For the threshold, not only does it encourage scientists to intentionally and unintentionally game themselves under the arbitrary limit in embarrassing ways, but it is also inappropriately high for many experimental questions/designs. There are cases, like with genome-wide association studies (GWAS), where scientists have learned to set a more stringent alpha (the p-value threshold), but this doesn’t entirely solve the Goodhart's law-like phenomena at play with p-values: HARKing, p-hacking, the file drawer effect, etc.
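For readers who have never computed a p-value, here is a small sketch of one. The scenario is hypothetical and chosen only for simplicity: a coin is flipped 100 times, lands heads 60 times, and the null hypothesis is that the coin is fair. The function name `binomial_p_value` is my own, and the test is one-sided to keep the code short.

```python
from math import comb


def binomial_p_value(successes, trials, null_rate=0.5):
    """One-sided p-value: P(at least `successes` hits in `trials`) if the null rate is true."""
    return sum(
        comb(trials, k) * null_rate**k * (1 - null_rate) ** (trials - k)
        for k in range(successes, trials + 1)
    )


p = binomial_p_value(successes=60, trials=100)
print(f"p = {p:.4f}")
print("below the 0.05 threshold" if p < 0.05 else "not below the 0.05 threshold")
```

This result falls under the 0.05 threshold, so the fair-coin null would be provisionally rejected. Note what the number is: the probability of data like this given the null hypothesis. It is not the probability that the coin is biased given the data, which is the question Bayes was asking.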

After Chivers efficiently describes the systemic issues plaguing research practices, he outlines the possible ways that a Bayesian conversion could improve things. I found myself pretty excited by what Bayesianism can offer science. There are two big things it could do: 1) it may disrupt the “publish-or-perish” incentives and novelty bias of publishers, and 2) it compels scientists and the audiences of scientists to recognize the uncertainty built into empirical claims. Bayesianism focuses on the magnitude of effect, the real thing of interest in most research, and presents these data as a probability distribution. It removes the binary, gameable p-value threshold and enforces a type of epistemic hygiene. There is a lot about contemporary research science that has become sclerotic, and I think Chivers’ proposals for injecting Bayesian methods into science would be a welcome shake-up. He acknowledges that Bayesianism is not a panacea. It is not even always the best approach for all questions. Nonetheless, it does offer solutions that we shouldn’t just ignore.

Is Bayes in the Brain?

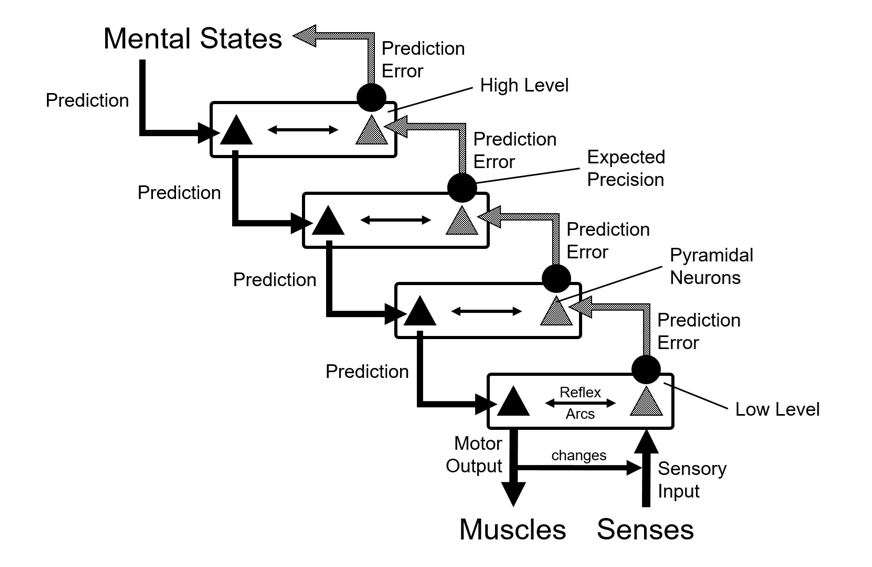

Many great philosophers, the first being Plato, have stumbled across the realization that our perceptual system is not simply delivering the world as is to our brain. In fact, our brain is taking inputs in through the sensory nervous system and then constructing conscious experiences; it’s “reality as controlled hallucination.” Thus, our brains almost inexorably function as some kind of prediction machine (at least in part). Chivers alleges that these processes must fundamentally be Bayesian.

Throughout Everything is Predictable, Chivers is prone to making the borders of what exactly is and isn’t Bayesian a bit blurry. The term nearly functions as a stand-in for all inferential thinking. I am fine with this capacious definition, but I’m sure motivated specialists may quibble. Nonetheless, Chivers is still getting at something. The brain is having to leverage imperfect information using a set of smart shortcuts and assumptions to get a good grasp on the external world. Bayesianism does offer a simple way to model what the brain is likely doing. The brain appears to have a top-down (the prior) model of the world and adjusts that model by constantly drawing upon bottom-up inputs (the likelihood) to reduce errors in its predictions about reality. The cataloging of various illusions that our brain is susceptible to lends credence to this model of neurobiology and consciousness - sometimes called predictive processing.

I don’t have a problem with conceiving of the brain as Bayesian. I think in a general sense it is true enough, especially for sensory-motor packages. Even as a casual way of understanding consciousness, I think it is better than the many alternatives. But I think there are places where this breaks down. Is the way in which the brain generates thoughts Bayesian? Are meta-cognitive processes in the brain Bayesian? Is all learning Bayesian? If we were to follow all these and other lines of thought about brain biology, the Bayesian frame would have to be stretched ever wider, perhaps to the point of meaninglessness.

I see at least one clear exception to the Bayesian brain model: recursion. There are recursive aspects of both the architecture of the brain and the nature of consciousness. Recursion, a process referring to itself repeatedly, seems distinct from a Bayesian process, which is iterative but linear, and just as important to consciousness. Recursion and Bayesian principles can be integrated to great effect, but I think they’re distinct things. I raise this point out of a special interest in consciousness and to admit that my prior is that the phenomenon is beyond Bayesian borders. If I were to build a more complex model of consciousness, it would include features in addition to Bayesian processing. This isn’t a failing on the part of the book. The subject is tangential to the core ideas on offer. Plus, like a good Bayesian, Chivers’ commentary on the brain demonstrates simple models have redeeming qualities of their own and can still be powerful predictors even when they have flaws.

The Best Bayes

So far, I hope it is clear just how engaging and interesting the content of Everything is Predictable is.8 Bayes’ Theorem and more broadly the concepts central to it and emerging from it are incredibly important to both our daily lives and to the highest levels of truth-seeking. Chivers cheerfully does the yeoman’s work of detailing in very accessible fashion just how true this is. It’s a pithy work too. I think this is a rare feat when talking about statistics and probability.

If being thoughtful, concise, and stimulating isn’t enough, Everything is Predictable also provides a beautiful balance between conceptual and practical content. I think this kind of balance is often missing from works of science communication. They’re either all practical or technical, in which case they are literally textbooks or written for specialists. Or they’re heady, dense conceptual works without practical value to most readers, or superficial works that offer hyped-up simplifications to tantalize and entertain credulous non-specialists. Chivers threads the needle with this book. I really appreciate how carefully and simply he walks readers through examples and applied uses of Bayes’ Theorem. This practical content also sits alongside a succinct tour of an intellectual debate that is still raging today (Frequentism vs Bayesianism), concretizing many of the abstract and abstruse ideas. I don’t think one could ask much more of a book on Bayes.

1. If my prior is wrong here, please forgive me and feel free to whinge at me. I’m swimming in a section of public discourse where casual invocations of Bayesian thought are common.

2. Part of what makes Chivers’ book so great is that he usually provides several examples to illustrate conceptual points. It can be somewhat repetitive, but once you get things down, you can easily skip over the extra examples.

3. Referring here to the Rationalist and Effective Altruist communities, who are very fond of Bayes’ Theorem.

4. I have a PhD in genomic medicine, where I studied the effects of variation in the gene PTEN.

5. We use something called the Frequentist approach, where we test for the probability (p-value) of seeing our measured results from an experiment assuming a hypothesis of no difference.

6. There is a sometimes quite heated debate between Bayesian and Frequentist camps, which we will return to later.

7. This of course is a very intuitive insight but is still fundamental to sampling-based statistics. It is the idea that a larger sample will provide a more representative picture of the theoretical true population. More formally stated: the accuracy of one’s estimate grows in proportion to the square root of the sample size.

8. I can’t and won’t cover everything in the book here. Plus, readers deserve to be pleasantly surprised if they choose to pick this one up. The portions of the book my review omits include what Bayes does for decision theory and quotidian life.

Nice intro to a very important topic.

If anyone is interested in looking at the next layer in how our brains may be functioning, I would highly recommend taking a look at Karl Friston's Free Energy Principle / Active Inference. It is not only a theory of brain function, but possibly even that of all of life. I have written a quick intro to it here: https://medium.com/@vinbhalerao/the-free-energy-principle-the-ultimate-theory-of-life-cade09130a06

Thanks for that review. I think that the idea that the brain acts like a Bayesian optimal decision maker in many situations is a useful working assumption. More useful in my opinion than lots of alternatives. Having said that, a purely Bayesian approach would be difficult because the world contains surprises. Savage's foundations for Bayesianism were explicitly for a "small world" where there are no unknown unknowns.